Visiomode

An open-source platform for touchscreen-based visuomotor tasks in rodents.

Visiomode is an open-source platform for rodent touchscreen-based visuomotor tasks. It has been designed to promote the use of touchscreens as an accessible option for implementing a variety of visual task paradigms, with flexibility, low cost, and ease of use in mind.

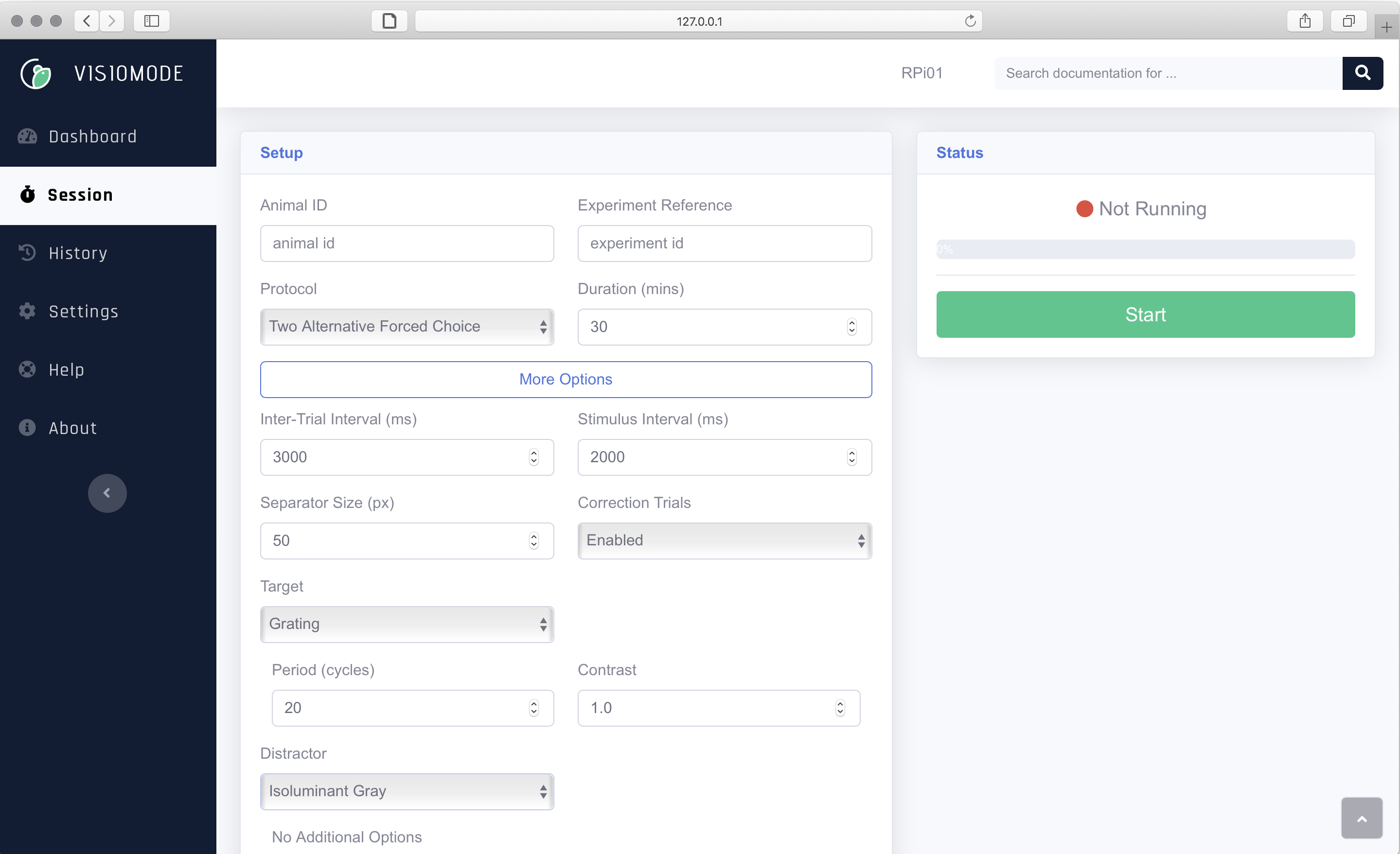

Visiomode is designed for the popular Raspberry Pi computer, and provides the user with an intuitive web interface to design and manage experiments. It can be deployed as a stand-alone cognitive testing solution in both freely-moving and head-restrained environments.

Visiomode provides an open software platform for building touchscreen-based tasks. Everything is designed to run on cheap and readily available electronics.

Accessible web-based UI for researchers to design and run experiments. The web interface can be accessed from any computer on the same network as the machine running Visiomode.

Flexible plugin system for extending the available experimental paradigms on the fly, without having to recompile or reinstall the software.

Ability to incorporate USB microcontrollers to act as input or output peripherals in different experimental protocols.

Visiomode is fully compatible with the Neurodata Without Borders ecosystem for neurophysiology.

Visiomode is developed by Constantinos Eleftheriou at the Duguid Lab. This work is funded by the Simons Initiative for the Developing Brain, the Wellcome Trust, and the University of Edinburgh.